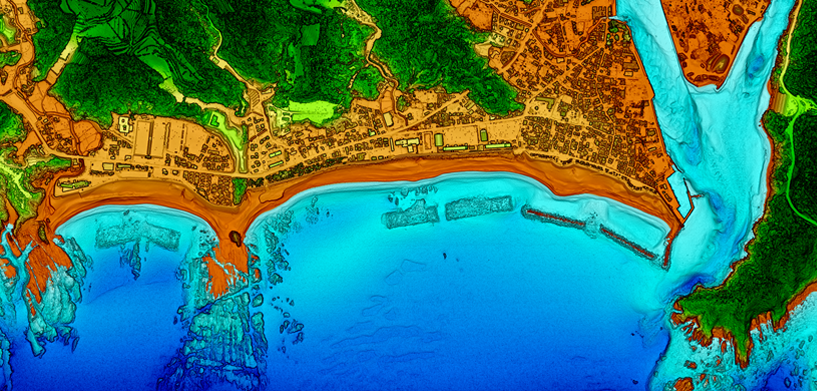

Despite some conspiracy theories, our world is not flat, and neither are the objects on it. However, most situational awareness systems still work in 2D, largely because of the sensors that provide their spatial context. Without heavy computation on that data, you can’t build a 3D situational awareness system that runs close to real time.

The introduction of laser scanning sensors (LiDAR), used here for data acquisition rather than target tagging, is changing the picture. Such sensors can’t be used everywhere or at all times, but LiDAR data inherently carries the third dimension, which opens up new analytics and strategies. For example, a new compartment on a ship can be assessed more accurately for its capabilities when its volume can be measured or better estimated. In suburban battlefields, the third dimension provides important strategic and operational elements when used in the right manner.
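To make the volume idea concrete, here is a minimal sketch of the crudest possible estimate: the volume of the axis-aligned box that encloses all scanned points. It assumes the compartment is roughly box-shaped and aligned with the coordinate axes, and that points are plain `(x, y, z)` tuples in meters. The function name `bounding_box_volume` and the sample coordinates are hypothetical, chosen only for illustration; a real workflow would more likely fit a mesh or count occupied voxels.

```python
def bounding_box_volume(points):
    """Volume of the axis-aligned box enclosing all points (input units cubed)."""
    if not points:
        raise ValueError("need at least one point")
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs)) * (max(ys) - min(ys)) * (max(zs) - min(zs))


# Hypothetical scan: the eight corners of a 4 m x 3 m x 2.5 m compartment.
corners = [(x, y, z) for x in (0.0, 4.0) for y in (0.0, 3.0) for z in (0.0, 2.5)]
volume_m3 = bounding_box_volume(corners)
print(volume_m3)
```

Because the box encloses every point, the result is an upper bound on the volume for any shape that is not a perfect axis-aligned box.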

The downside is that scans produce more points than a typical system can handle. Even worse, the only information each point carries is its 3D coordinate and, if enriched by a classical electro-optical sensor, its color. The sheer number of points makes the data useless unless we can structure it and extract information automatically.
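One common first step toward taming the point count is voxel-grid downsampling: divide space into cubes of a fixed size and replace all points in each cube with their centroid. The sketch below is an illustration in plain Python, not the method used in any particular product. It assumes points are `(x, y, z)` tuples, and the name `voxel_downsample` and the parameter `voxel_size` are hypothetical.

```python
from collections import defaultdict
from math import floor


def voxel_downsample(points, voxel_size):
    """Replace all points that fall in the same cubic voxel by their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    # Per voxel: running sums of x, y, z and the number of points seen.
    sums = defaultdict(lambda: [0.0, 0.0, 0.0, 0])
    for x, y, z in points:
        key = (floor(x / voxel_size), floor(y / voxel_size), floor(z / voxel_size))
        acc = sums[key]
        acc[0] += x
        acc[1] += y
        acc[2] += z
        acc[3] += 1
    # One centroid per occupied voxel.
    return [(sx / n, sy / n, sz / n) for sx, sy, sz, n in sums.values()]


# Hypothetical cloud: two points share a 1 m voxel, a third lies in the next one.
cloud = [(0.1, 0.1, 0.1), (0.3, 0.3, 0.3), (1.2, 0.1, 0.1)]
reduced = voxel_downsample(cloud, 1.0)
print(len(reduced))
```

A larger `voxel_size` reduces the point count more aggressively at the cost of detail, so the value is a trade-off between what the system can handle and what the analysis needs.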

AI is an important method for classifying and segmenting the resulting point cloud to derive insights from those “dummy” points. Besides recognizing solid objects (such as houses) or vegetation, one can extract human activity layers or specific objects from the formerly unstructured cloud.

Watch the video to learn more about the AI tools needed to transform unstructured data into structured data and visualizations for situational awareness systems, including 3D fusion of data from different formats and sensors.